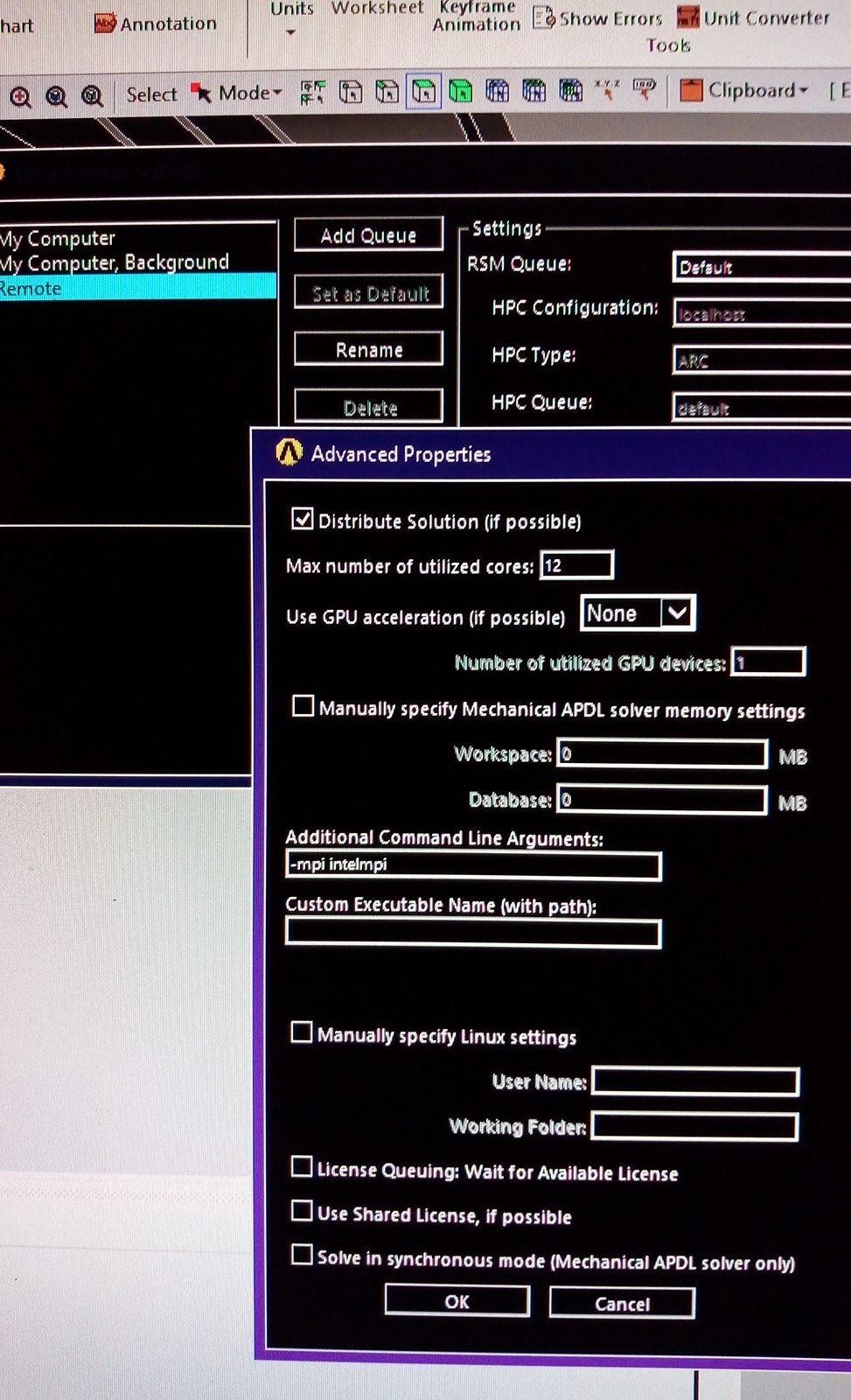

Yesterday I experimented with Intel MPI, since I had previously seen some load on the solvers with it. The screenshot of the Solve Process Settings dialog with the Advanced tab is below.

I tried both with and without the -mpi intelmpi argument. The result was the same: the solve started on all machines and CPU load was at 100%, but only for a couple of minutes, after which I got an MPI_Abort error (the full error message is also below).

Note: the message "Invalid working directory value= C:/RSMStaging/aokqqj0p.5k1" is weird, as the folder actually exists and is accessible by the solvers. I'm not sure why it has the wrong slashes here.
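As a quick sanity check on the slashes: Windows path handling generally treats `/` and `\` as equivalent separators, so the forward slashes by themselves may not be what makes the directory "invalid". A minimal sketch using Python's standard `ntpath` module illustrates this. The two path strings are my reading of the log, and nothing here is ANSYS-specific or says how the solver itself validates `-dir`:

```python
import ntpath  # Windows path rules; importable on any OS

# Working directory as it is passed to the solver via -dir (forward slashes).
from_solver_args = "C:/RSMStaging/aokqqj0p.5k1"
# The same directory as RSM reports it elsewhere in the log (backslashes).
from_rsm = r"C:\RSMStaging\aokqqj0p.5k1"

normalized = ntpath.normpath(from_solver_args)
print(normalized)
print(normalized == ntpath.normpath(from_rsm))
```

Both spellings normalize to the same directory, so it may be more useful to check whether that directory is actually reachable under the same path from every machine in the job.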

Also, I have an NVIDIA Quadro GPU in each machine. Would it be reasonable to enable GPU acceleration to improve performance?

Error message (excerpt):

send of 20 bytes failed.

Command Exit Code: 99

application called MPI_Abort(MPI_COMM_WORLD, 99) - process 0

[mpiexec@Maincad] ..\hydra\utils\sock\sock.c (420): write error (Unknown error)

[mpiexec@Maincad] ..\hydra\utils\launch\launch.c (121): shutdown failed, sock 648, error 10093

ClusterJobs Command Exit Code: 99

Saving exit code file: C:\RSMStaging\aokqqj0p.5k1\exitcode_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.rsmout

Exit code file: C:\RSMStaging\aokqqj0p.5k1\exitcode_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.rsmout has been created.

Saving exit code file: C:\RSMStaging\aokqqj0p.5k1\exitcodeCommands_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.rsmout

Exit code file: C:\RSMStaging\aokqqj0p.5k1\exitcodeCommands_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.rsmout has been created.

=======SOLVE.OUT FILE======

solve --> *** FATAL ***

solve --> Attempt to run ANSYS in a distributed mode failed.

solve --> The Distributed ANSYS process with MPI Rank ID = 4 is not responding.

solve --> Please refer to the Distributed ANSYS Guide for detailed setup and configuration information.

ClusterJobs Exiting with code: 99

Individual Command Exit Codes are: [99]
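To see which machine the non-responding rank probably belongs to, here is a minimal sketch that parses the `-machines` value from the full log (`maincad:4:cluster01:4:cluster02:4`) and maps MPI ranks to hosts. It assumes ranks are filled host by host in the order listed. That is only an assumption, since the actual placement is decided by the MPI launcher, and the helper names `parse_machines` and `rank_to_host` are made up for this illustration:

```python
def parse_machines(spec):
    """Split 'host:n:host:n:...' into a list of (host, cores) pairs."""
    parts = spec.split(":")
    if len(parts) % 2 != 0:
        raise ValueError("expected alternating host and core-count fields")
    return [(parts[i], int(parts[i + 1])) for i in range(0, len(parts), 2)]


def rank_to_host(machines):
    """List the host of each rank, assuming ranks follow the listed order."""
    hosts = []
    for host, cores in machines:
        hosts.extend([host] * cores)
    return hosts


machines = parse_machines("maincad:4:cluster01:4:cluster02:4")
ranks = rank_to_host(machines)

print(len(machines), "nodes,", len(ranks), "processes")
print("rank 4 ->", ranks[4])
```

Under that assumption the totals match the 3 nodes and 12 CPUs reported by RSM, and rank 4 would be the first process that does not run on Maincad.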

Full error log:

RSM Version: 19.5.420.0, Build Date: 30/07/2019 5:07:06 am

RSM File Version: 19.5.0-beta.3+Branch.release-19.5.Sha.9c15e0a3e0a608e8efe8c568e494d2160788d025

RSM Library Version: 19.5.0.0

Job Name: Annulus_Thread_MAX_Sludge-SidWalls=No Separation,rhoSludge=2,3,t=0-DP0-Model (B4)-Static Structural (B5)-Solution (B6)

Type: Mechanical_ANSYS

Client Directory: C:TestProject_ProjectScratchScr6531

Client Machine: Maincad

Queue: Default [localhost, default]

Template: Mechanical_ANSYSJob.xml

Cluster Configuration: localhost [localhost]

Cluster Type: ARC

Custom Keyword: blank

Transfer Option: NativeOS

Staging Directory: C:\RSMStaging

Delete Staging Directory: True

Local Scratch Directory: blank

Platform: Windows

Cluster Submit Options: blank

Normal Inputs: [*.dat,file*.*,*.mac,thermal.build,commands.xml,SecInput.txt]

Cancel Inputs: [file.abt,sec.interrupt]

Excluded Inputs: [-]

Normal Outputs: [*.xml,*.NR*,CAERepOutput.xml,cyclic_map.json,exit.topo,file*.dsub,file*.ldhi,file*.png,file*.r0*,file*.r1*,file*.r2*,file*.r3*,file*.r4*,file*.r5*,file*.r6*,file*.r7*,file*.r8*,file*.r9*,file*.rd*,file*.rst,file.BCS,file.ce,file.cm,file.cnd,file.cnm,file.DSP,file.err,file.gst,file.json,file.nd*,file.nlh,file.nr*,file.PCS,file.rdb,file.rfl,file.spm,file0.BCS,file0.ce,file0.cnd,file0.err,file0.gst,file0.nd*,file0.nlh,file0.nr*,file0.PCS,frequencies_*.out,input.x17,intermediate*.topo,Mode_mapping_*.txt,NotSupportedElems.dat,ObjectiveHistory.out,post.out,PostImage*.png,record.txt,solve*.out,topo.err,topo.out,vars.topo,SecDebugLog.txt,secStart.log,sec.validation.executed,sec.envvarvalidation.executed,sec.failure]

Failure Outputs: [*.xml,*.NR*,CAERepOutput.xml,cyclic_map.json,exit.topo,file*.dsub,file*.ldhi,file*.png,file*.r0*,file*.r1*,file*.r2*,file*.r3*,file*.r4*,file*.r5*,file*.r6*,file*.r7*,file*.r8*,file*.r9*,file*.rd*,file*.rst,file.BCS,file.ce,file.cm,file.cnd,file.cnm,file.DSP,file.err,file.gst,file.json,file.nd*,file.nlh,file.nr*,file.PCS,file.rdb,file.rfl,file.spm,file0.BCS,file0.ce,file0.cnd,file0.err,file0.gst,file0.nd*,file0.nlh,file0.nr*,file0.PCS,frequencies_*.out,input.x17,intermediate*.topo,Mode_mapping_*.txt,NotSupportedElems.dat,ObjectiveHistory.out,post.out,PostImage*.png,record.txt,solve*.out,topo.err,topo.out,vars.topo,SecDebugLog.txt,sec.solverexitcode,secStart.log,sec.failure,sec.envvarvalidation.executed]

Cancel Outputs: [file*.err,solve.out,secStart.log,SecDebugLog.txt]

Excluded Outputs: [-]

Inquire Files:

normal: [*.xml,*.NR*,CAERepOutput.xml,cyclic_map.json,exit.topo,file*.dsub,file*.ldhi,file*.png,file*.r0*,file*.r1*,file*.r2*,file*.r3*,file*.r4*,file*.r5*,file*.r6*,file*.r7*,file*.r8*,file*.r9*,file*.rd*,file*.rst,file.BCS,file.ce,file.cm,file.cnd,file.cnm,file.DSP,file.err,file.gst,file.json,file.nd*,file.nlh,file.nr*,file.PCS,file.rdb,file.rfl,file.spm,file0.BCS,file0.ce,file0.cnd,file0.err,file0.gst,file0.nd*,file0.nlh,file0.nr*,file0.PCS,frequencies_*.out,input.x17,intermediate*.topo,Mode_mapping_*.txt,NotSupportedElems.dat,ObjectiveHistory.out,post.out,PostImage*.png,record.txt,solve*.out,topo.err,topo.out,vars.topo,SecDebugLog.txt,secStart.log,sec.validation.executed,sec.envvarvalidation.executed,sec.failure]

cancel: [file*.err,solve.out,secStart.log,SecDebugLog.txt]

failure: [*.xml,*.NR*,CAERepOutput.xml,cyclic_map.json,exit.topo,file*.dsub,file*.ldhi,file*.png,file*.r0*,file*.r1*,file*.r2*,file*.r3*,file*.r4*,file*.r5*,file*.r6*,file*.r7*,file*.r8*,file*.r9*,file*.rd*,file*.rst,file.BCS,file.ce,file.cm,file.cnd,file.cnm,file.DSP,file.err,file.gst,file.json,file.nd*,file.nlh,file.nr*,file.PCS,file.rdb,file.rfl,file.spm,file0.BCS,file0.ce,file0.cnd,file0.err,file0.gst,file0.nd*,file0.nlh,file0.nr*,file0.PCS,frequencies_*.out,input.x17,intermediate*.topo,Mode_mapping_*.txt,NotSupportedElems.dat,ObjectiveHistory.out,post.out,PostImage*.png,record.txt,solve*.out,topo.err,topo.out,vars.topo,SecDebugLog.txt,sec.solverexitcode,secStart.log,sec.failure,sec.envvarvalidation.executed]

RemotePostInformation: [RemotePostInformation.txt]

SolutionInformation: [solve.out,file.gst,file.nlh,file0.gst,file0.nlh,file.cnd]

PostDuringSolve: [file.rcn,file.redm,file.rfl,file.rfrq,file.rmg,file.rdsp,file.rsx,file.rst,file.rth,solve.out,file.gst,file.nlh,file0.gst,file0.nlh,file.cnd]

transcript: [solve.out,monitor.json]

Submission in progress...

Created storage directory C:\RSMStaging\aokqqj0p.5k1

Runtime Settings:

Job Owner: MAINCAD\User

Submit Time: Tuesday, 8 October 2019 11:40 pm

Directory: C:\RSMStaging\aokqqj0p.5k1

Uploading file: C:TestProject_ProjectScratchScr6531ds.dat

Uploading file: C:TestProject_ProjectScratchScr6531remote.dat

Uploading file: C:TestProject_ProjectScratchScr6531commands.xml

Uploading file: C:TestProject_ProjectScratchScr6531SecInput.txt

39898.74 KB, .08 sec (498807.21 KB/sec)

Submission in progress...

JobType is: Mechanical_ANSYS

Final command platform: Windows

RSM_PYTHON_HOME=C:\Program Files\ANSYS Inc\v195\commonfiles\CPython\2_7_15\winx64\Release\python

RSM_HPC_JOBNAME=Mechanical

Distributed mode requested: True

RSM_HPC_DISTRIBUTED=TRUE

Running 1 commands

Job working directory: C:\RSMStaging\aokqqj0p.5k1

Number of CPU requested: 12

AWP_ROOT195=C:\Program Files\ANSYS Inc\v195

Checking queue default exists ...

JobId was parsed as: 4

Job submission was successful.

Job is running on hostname Maincad

Job user from this host: User

Starting directory: C:\RSMStaging\aokqqj0p.5k1

Reading control file C:\RSMStaging\aokqqj0p.5k1\control_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.rsm ....

Correct Cluster verified

Cluster Type: ARC

Underlying Cluster: ARC

RSM_CLUSTER_TYPE = ARC

Compute Server is running on MAINCAD

Reading commands and arguments...

Command 1: %AWP_ROOT195%\SEC\SolverExecutionController\runsec.bat, arguments: , redirectFile: None

Running from shared staging directory ...

RSM_USE_LOCAL_SCRATCH = False

RSM_LOCAL_SCRATCH_DIRECTORY =

RSM_LOCAL_SCRATCH_PARTIAL_UNC_PATH =

Cluster Shared Directory: C:\RSMStaging\aokqqj0p.5k1

RSM_SHARE_STAGING_DIRECTORY = C:\RSMStaging\aokqqj0p.5k1

Job file clean up: True

Use SSH on Linux cluster nodes: False

RSM_USE_SSH_LINUX = False

LivelogFile: NOLIVELOGFILE

StdoutLiveLogFile: stdout_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.live

StderrLiveLogFile: stderr_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.live

Reading input files...

*.dat

file*.*

*.mac

thermal.build

commands.xml

SecInput.txt

Reading cancel files...

file.abt

sec.interrupt

Reading output files...

*.xml

*.NR*

CAERepOutput.xml

cyclic_map.json

exit.topo

file*.dsub

file*.ldhi

file*.png

file*.r0*

file*.r1*

file*.r2*

file*.r3*

file*.r4*

file*.r5*

file*.r6*

file*.r7*

file*.r8*

file*.r9*

file*.rd*

file*.rst

file.BCS

file.ce

file.cm

file.cnd

file.cnm

file.DSP

file.err

file.gst

file.json

file.nd*

file.nlh

file.nr*

file.PCS

file.rdb

file.rfl

file.spm

file0.BCS

file0.ce

file0.cnd

file0.err

file0.gst

file0.nd*

file0.nlh

file0.nr*

file0.PCS

frequencies_*.out

input.x17

intermediate*.topo

Mode_mapping_*.txt

NotSupportedElems.dat

ObjectiveHistory.out

post.out

PostImage*.png

record.txt

solve*.out

topo.err

topo.out

vars.topo

SecDebugLog.txt

secStart.log

sec.validation.executed

sec.envvarvalidation.executed

sec.failure

*.xml

*.NR*

CAERepOutput.xml

cyclic_map.json

exit.topo

file*.dsub

file*.ldhi

file*.png

file*.r0*

file*.r1*

file*.r2*

file*.r3*

file*.r4*

file*.r5*

file*.r6*

file*.r7*

file*.r8*

file*.r9*

file*.rd*

file*.rst

file.BCS

file.ce

file.cm

file.cnd

file.cnm

file.DSP

file.err

file.gst

file.json

file.nd*

file.nlh

file.nr*

file.PCS

file.rdb

file.rfl

file.spm

file0.BCS

file0.ce

file0.cnd

file0.err

file0.gst

file0.nd*

file0.nlh

file0.nr*

file0.PCS

frequencies_*.out

input.x17

intermediate*.topo

Mode_mapping_*.txt

NotSupportedElems.dat

ObjectiveHistory.out

post.out

PostImage*.png

record.txt

solve*.out

topo.err

topo.out

vars.topo

SecDebugLog.txt

sec.solverexitcode

secStart.log

sec.failure

sec.envvarvalidation.executed

Reading exclude files...

stdout_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.rsmout

stderr_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.rsmout

control_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.rsm

hosts.dat

exitcode_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.rsmout

exitcodeCommands_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.rsmout

stdout_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.live

stderr_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.live

ClusterJobCustomization.xml

ClusterJobs.py

clusterjob_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.sh

clusterjob_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.bat

inquire.request

inquire.confirm

request.upload.rsm

request.download.rsm

wait.download.rsm

scratch.job.rsm

volatile.job.rsm

restart.xml

cancel_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.rsmout

liveLogLastPositions_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.rsm

stdout_782e78b5-2c00-45fa-b7ac-6976aa49c2cf_kill.rsmout

stderr_782e78b5-2c00-45fa-b7ac-6976aa49c2cf_kill.rsmout

sec.interrupt

stdout_782e78b5-2c00-45fa-b7ac-6976aa49c2cf_*.rsmout

stderr_782e78b5-2c00-45fa-b7ac-6976aa49c2cf_*.rsmout

stdout_task_*.live

stderr_task_*.live

control_task_*.rsm

stdout_task_*.rsmout

stderr_task_*.rsmout

exitcode_task_*.rsmout

exitcodeCommands_task_*.rsmout

file.abt

Reading environment variables...

RSM_IRON_PYTHON_HOME = C:\Program Files\ANSYS Inc\v195\commonfiles\IronPython

RSM_TASK_WORKING_DIRECTORY = C:\RSMStaging\aokqqj0p.5k1

RSM_USE_SSH_LINUX = False

RSM_QUEUE_NAME = default

RSM_CONFIGUREDQUEUE_NAME = Default

RSM_COMPUTE_SERVER_MACHINE_NAME = Maincad

RSM_HPC_JOBNAME = Mechanical

RSM_HPC_DISPLAYNAME = Annulus_Thread_MAX_Sludge-SidWalls=No Separation,rhoSludge=2,3,t=0-DP0-Model (B4)-Static Structural (B5)-Solution (B6)

RSM_HPC_CORES = 12

RSM_HPC_DISTRIBUTED = TRUE

RSM_HPC_NODE_EXCLUSIVE = FALSE

RSM_HPC_QUEUE = default

RSM_HPC_USER = MAINCAD\User

RSM_HPC_WORKDIR = C:\RSMStaging\aokqqj0p.5k1

RSM_HPC_JOBTYPE = Mechanical_ANSYS

RSM_HPC_ANSYS_LOCAL_INSTALL_DIRECTORY = C:\Program Files\ANSYS Inc\v195

RSM_HPC_VERSION = 195

RSM_HPC_STAGING = C:\RSMStaging\aokqqj0p.5k1

RSM_HPC_LOCAL_PLATFORM = Windows

RSM_HPC_CLUSTER_TARGET_PLATFORM = Windows

RSM_HPC_STDOUTFILE = stdout_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.rsmout

RSM_HPC_STDERRFILE = stderr_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.rsmout

RSM_HPC_STDOUTLIVE = stdout_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.live

RSM_HPC_STDERRLIVE = stderr_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.live

RSM_HPC_SCRIPTS_DIRECTORY_LOCAL = C:\Program Files\ANSYS Inc\v195\RSM\Config\scripts

RSM_HPC_SCRIPTS_DIRECTORY = C:\Program Files\ANSYS Inc\v195\RSM\Config\scripts

RSM_HPC_SUBMITHOST = localhost

RSM_HPC_STORAGEID =

RSM_HPC_PLATFORMSTORAGEID = C:\RSMStaging\aokqqj0p.5k1

RSM_HPC_NATIVEOPTIONS =

ARC_ROOT = C:\Program Files\ANSYS Inc\v195\RSM\Config\scripts\..\..\ARC

RSM_HPC_KEYWORD = ARC

RSM_PYTHON_LOCALE = en-us

Reading AWP_ROOT environment variable name ...

AWP_ROOT environment variable name is: AWP_ROOT195

Reading Low Disk Space Warning Limit ...

Low disk space warning threshold set at: 2.0GiB

Reading File identifier ...

File identifier found as: 782e78b5-2c00-45fa-b7ac-6976aa49c2cf

Done reading control file.

RSM_AWP_ROOT_NAME = AWP_ROOT195

AWP_ROOT195 install directory: C:\Program Files\ANSYS Inc\v195

RSM_MACHINES = maincad:4:cluster01:4:cluster02:4

Number of nodes assigned for current job = 3

Machine list:

Checking DisableUNCCheck ...

Unable to read registry for HKEY_CURRENT_USER\Software\Microsoft\Command Processor

Ignore verifying DisableUNCCheck, you may check it manually if not already done so.

Start running job commands ...

Running on machine : Maincad

Current Directory: C:\RSMStaging\aokqqj0p.5k1

Running command: C:\Program Files\ANSYS Inc\v195\SEC\SolverExecutionController\runsec.bat

Redirecting output to None

Final command arg list :

Running Process

Running Solver : C:\Program Files\ANSYS Inc\v195\ansys\bin\winx64\ANSYS195.exe -b nolist -s noread -acc nvidia -na 1 -p ansys -mpi intelmpi -i remote.dat -o solve.out -dis -machines maincad:4:cluster01:4:cluster02:4 -dir "C:/RSMStaging/aokqqj0p.5k1"

*** The AWP_ROOT195 environment variable is set to: C:\Program Files\ANSYS Inc\v195

*** The AWP_LOCALE195 environment variable is set to: en-us

*** The ANSYS195_DIR environment variable is set to: C:\Program Files\ANSYS Inc\v195\ANSYS

*** The ANSYS_SYSDIR environment variable is set to: winx64

*** The ANSYS_SYSDIR32 environment variable is set to: intel

*** The CADOE_DOCDIR195 environment variable is set to: C:\Program Files\ANSYS Inc\v195\CommonFiles\help\en-us\solviewer

*** The CADOE_LIBDIR195 environment variable is set to: C:\Program Files\ANSYS Inc\v195\CommonFiles\Language\en-us

*** The LSTC_LICENSE environment variable is set to: ANSYS

*** The P_SCHEMA environment variable is set to: C:\Program Files\ANSYS Inc\v195\AISOL\CADIntegration\Parasolid\PSchema

*** The TEMP environment variable is set to: C:\Users\User\AppData\Local\Temp

*** FATAL ***

Invalid working directory value= C:/RSMStaging/aokqqj0p.5k1

(specified working directory does not exist)

*** NOTE ***

USAGE: C:\Program Files\ANSYS Inc\v195\ANSYS\bin\winx64\ANSYS.EXE

[-d device name] [-j job name]

[-b list|nolist] [-m scratch memory(mb)]

[-s read|noread] [-g] [-db database(mb)]

[-p product] [-l language]

[-dyn] [-np #] [-mfm]

[-dvt] [-dis] [-machines list]

[-i inputfile] [-o outputfile]

[-ser port] [-scport port]

[-scname couplingname] [-schost hostname]

[-smp] [-mpi intelmpi|ibmmpi|msmpi]

[-dir working_directory ]

*** FATAL ***

Invalid working directory value= C:/RSMStaging/aokqqj0p.5k1

(specified working directory does not exist)

*** NOTE ***

USAGE: C:\Program Files\ANSYS Inc\v195\ANSYS\bin\winx64\ANSYS.EXE

[-d device name] [-j job name]

[-b list|nolist] [-m scratch memory(mb)]

[-s read|noread] [-g] [-db database(mb)]

[-p product] [-l language]

[-dyn] [-np #] [-mfm]

[-dvt] [-dis] [-machines list]

[-i inputfile] [-o outputfile]

[-ser port] [-scport port]

[-scname couplingname] [-schost hostname]

[-smp] [-mpi intelmpi|ibmmpi|msmpi]

[-dir working_directory ]

[-acc nvidia] [-na #]

*** FATAL ***

Invalid working directory value= C:/RSMStaging/aokqqj0p.5k1

(specified working directory does not exist)

*** NOTE ***

USAGE: C:\Program Files\ANSYS Inc\v195\ANSYS\bin\winx64\ANSYS.EXE

[-d device name] [-j job name]

[-b list|nolist] [-m scratch memory(mb)]

[-s read|noread] [-g] [-db database(mb)]

[-p product] [-l language]

[-dyn] [-np #] [-mfm]

[-dvt] [-dis] [-machines list]

[-i inputfile] [-o outputfile]

[-ser port] [-scport port]

[-scname couplingname] [-schost hostname]

[-smp] [-mpi intelmpi|ibmmpi|msmpi]

[-dir working_directory ]

[-acc nvidia] [-na #]

*** FATAL ***

Invalid working directory value= C:/RSMStaging/aokqqj0p.5k1

(specified working directory does not exist)

*** NOTE ***

USAGE: C:\Program Files\ANSYS Inc\v195\ANSYS\bin\winx64\ANSYS.EXE

[-d device name] [-j job name]

[-b list|nolist] [-m scratch memory(mb)]

[-s read|noread] [-g] [-db database(mb)]

[-p product] [-l language]

[-dyn] [-np #] [-mfm]

[-dvt] [-dis] [-machines list]

[-i inputfile] [-o outputfile]

[-ser port] [-scport port]

[-scname couplingname] [-schost hostname]

[-smp] [-mpi intelmpi|ibmmpi|msmpi]

[-dir working_directory ]

[-acc nvidia] [-na #]

[-acc nvidia] [-na #]

*** FATAL ***

Invalid working directory value= C:/RSMStaging/aokqqj0p.5k1

(specified working directory does not exist)

*** NOTE ***

USAGE: C:\Program Files\ANSYS Inc\v195\ANSYS\bin\winx64\ANSYS.EXE

[-d device name] [-j job name]

[-b list|nolist] [-m scratch memory(mb)]

[-s read|noread] [-g] [-db database(mb)]

[-p product] [-l language]

[-dyn] [-np #] [-mfm]

[-dvt] [-dis] [-machines list]

[-i inputfile] [-o outputfile]

[-ser port] [-scport port]

[-scname couplingname] [-schost hostname]

[-smp] [-mpi intelmpi|ibmmpi|msmpi]

[-dir working_directory ]

[-acc nvidia] [-na #]

*** FATAL ***

Invalid working directory value= C:/RSMStaging/aokqqj0p.5k1

(specified working directory does not exist)

*** NOTE ***

USAGE: C:\Program Files\ANSYS Inc\v195\ANSYS\bin\winx64\ANSYS.EXE

[-d device name] [-j job name]

[-b list|nolist] [-m scratch memory(mb)]

[-s read|noread] [-g] [-db database(mb)]

[-p product] [-l language]

[-dyn] [-np #] [-mfm]

[-dvt] [-dis] [-machines list]

[-i inputfile] [-o outputfile]

[-ser port] [-scport port]

[-scname couplingname] [-schost hostname]

[-smp] [-mpi intelmpi|ibmmpi|msmpi]

[-dir working_directory ]

[-acc nvidia] [-na #]

*** FATAL ***

Invalid working directory value= C:/RSMStaging/aokqqj0p.5k1

(specified working directory does not exist)

*** NOTE ***

USAGE: C:\Program Files\ANSYS Inc\v195\ANSYS\bin\winx64\ANSYS.EXE

[-d device name] [-j job name]

[-b list|nolist] [-m scratch memory(mb)]

[-s read|noread] [-g] [-db database(mb)]

[-p product] [-l language]

[-dyn] [-np #] [-mfm]

[-dvt] [-dis] [-machines list]

[-i inputfile] [-o outputfile]

[-ser port] [-scport port]

[-scname couplingname] [-schost hostname]

[-smp] [-mpi intelmpi|ibmmpi|msmpi]

[-dir working_directory ]

[-acc nvidia] [-na #]

*** FATAL ***

Invalid working directory value= C:/RSMStaging/aokqqj0p.5k1

(specified working directory does not exist)

*** NOTE ***

USAGE: C:\Program Files\ANSYS Inc\v195\ANSYS\bin\winx64\ANSYS.EXE

[-d device name] [-j job name]

[-b list|nolist] [-m scratch memory(mb)]

[-s read|noread] [-g] [-db database(mb)]

[-p product] [-l language]

[-dyn] [-np #] [-mfm]

[-dvt] [-dis] [-machines list]

[-i inputfile] [-o outputfile]

[-ser port] [-scport port]

[-scname couplingname] [-schost hostname]

[-smp] [-mpi intelmpi|ibmmpi|msmpi]

[-dir working_directory ]

[-acc nvidia] [-na #]

send of 20 bytes failed.

Command Exit Code: 99

application called MPI_Abort(MPI_COMM_WORLD, 99) - process 0

[mpiexec@Maincad] ..\hydra\utils\sock\sock.c (420): write error (Unknown error)

[mpiexec@Maincad] ..\hydra\utils\launch\launch.c (121): shutdown failed, sock 648, error 10093

ClusterJobs Command Exit Code: 99

Saving exit code file: C:\RSMStaging\aokqqj0p.5k1\exitcode_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.rsmout

Exit code file: C:\RSMStaging\aokqqj0p.5k1\exitcode_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.rsmout has been created.

Saving exit code file: C:\RSMStaging\aokqqj0p.5k1\exitcodeCommands_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.rsmout

Exit code file: C:\RSMStaging\aokqqj0p.5k1\exitcodeCommands_782e78b5-2c00-45fa-b7ac-6976aa49c2cf.rsmout has been created.

=======SOLVE.OUT FILE======

solve --> *** FATAL ***

solve --> Attempt to run ANSYS in a distributed mode failed.

solve --> The Distributed ANSYS process with MPI Rank ID = 4 is not responding.

solve --> Please refer to the Distributed ANSYS Guide for detailed setup and configuration information.

ClusterJobs Exiting with code: 99

Individual Command Exit Codes are: [99]